At Quantitools, we strive to offer top-notch data that is cleaned and processed so it is ready for analysis. It can be combined with, or kept separate from, data from other exchanges or markets for different analytical purposes.

Why does Quantitools offer first-class data?

Granular orderbook deltas and tick-by-tick trades

Proprietary standardization process for combining orderbook and trade datasets from different exchanges

Data consistency ensured by our strict validation procedure¹

Redundant collectors to minimize the possibility of data loss²

By traders, for traders, leveraging years of experience in algorithmic trading

¹ We collect and store all missing events to make the data analyst's work easier.

² Exchange APIs are not always 100% reliable. We minimize the risk of data loss, but some things are outside our control.

Exchanges

We collect our data from multiple exchanges across the globe, so we start with the exchanges we work with.

Data Process

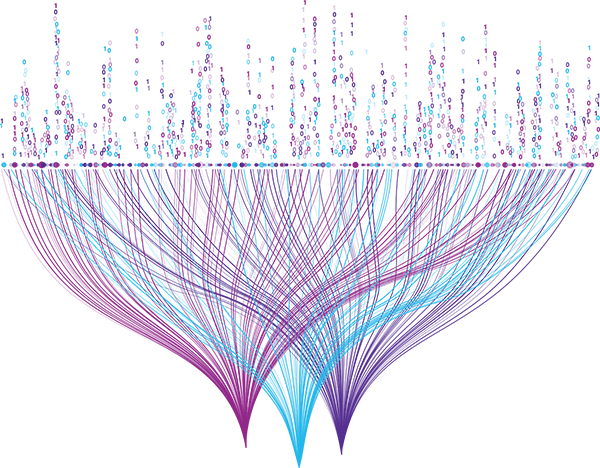

After data is collected from one of these exchanges, it is processed: reshaped, validated, and made ready to be offered. The figure below gives a general idea of how the data is processed.

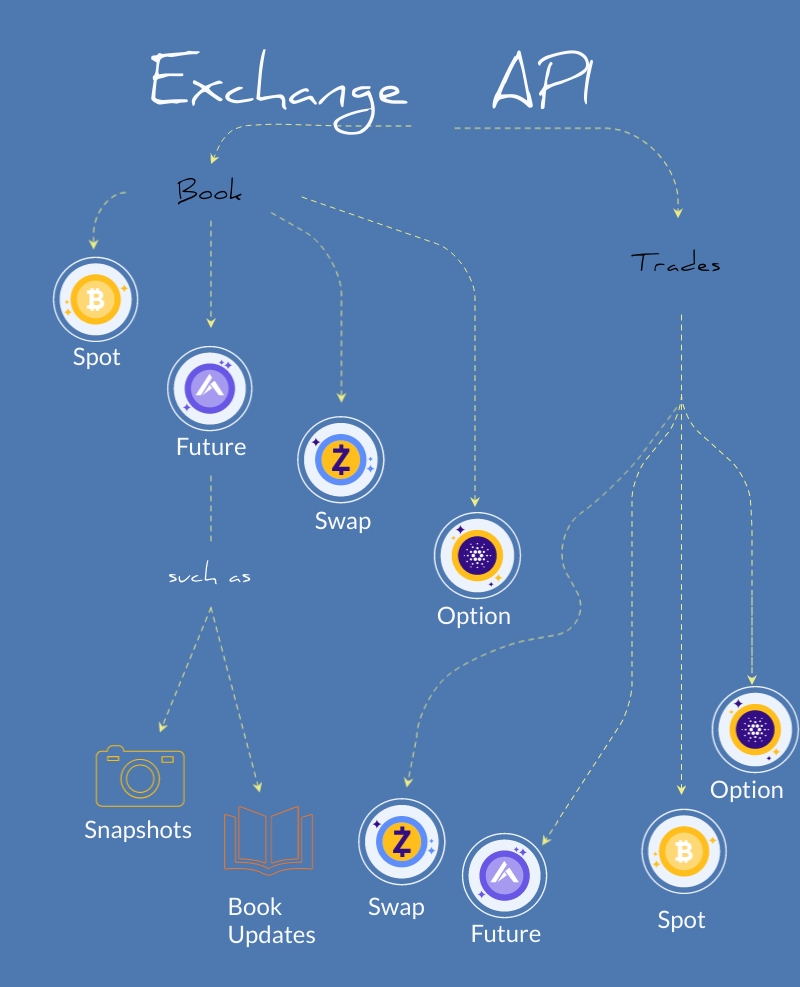

Exchange API

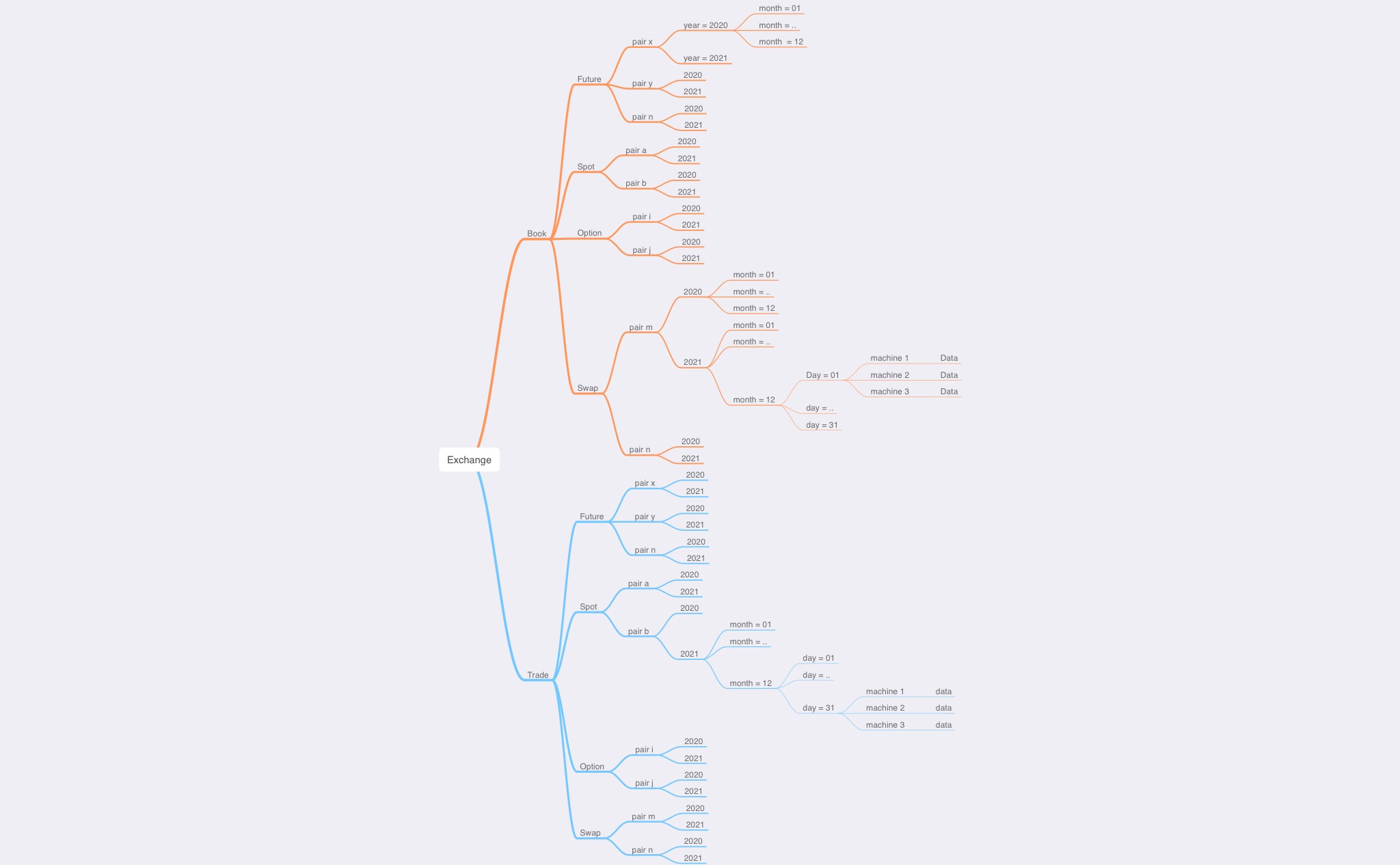

Both the raw data and the processed data are partitioned to offer the most granular data we can. The data is separated first by exchange, then by type (book or trades), then by the markets the exchange offers (such as futures, spot, and swaps), and then by time (year, month, day). Within each day we keep all updates and snapshots for orderbooks, and all buy and sell actions for trades.
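The partitioning order described above can be sketched as a path builder. This is only an illustration: the function name `partition_path`, the lowercase type labels, the zero-padded date parts, and the example exchange name are assumptions, not the actual Quantitools storage layout.

```python
from datetime import date
from pathlib import PurePosixPath

# Hypothetical labels for the two data types named in the text.
DATA_TYPES = {"book", "trades"}


def partition_path(exchange: str, data_type: str, market: str, day: date) -> PurePosixPath:
    """Build exchange/type/market/year/month/day, following the order in the text."""
    if data_type not in DATA_TYPES:
        raise ValueError(f"unknown data type: {data_type!r}")
    return PurePosixPath(
        exchange,
        data_type,
        market,
        f"{day.year:04d}",
        f"{day.month:02d}",
        f"{day.day:02d}",
    )


# "example_exchange" is a placeholder, not a real exchange identifier.
print(partition_path("example_exchange", "book", "futures", date(2023, 1, 5)))
```

Ordering the partitions from broadest (exchange) to narrowest (day) means that one day of one market on one exchange can be selected by path alone.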

All the data collected as shown in the figure above goes through further processing to be reshaped into a single format. The data catalog below illustrates what the data looks like when it is ready to be offered.

Data Catalog

| Field | Type | Description |
|---|---|---|
| ID | STRING | ID provided by the exchange to uniquely identify an entity |
| TYPE | STRING | Type of action, which may include updates, inserts, deletes, snapshots, and trades |
| LOCAL TIMESTAMP | FLOAT | The local time recorded by Quantitools machines |
| EXCHANGE TIMESTAMP | FLOAT | The time of the entity as provided by the exchange itself |
| SIDE | STRING | The side of the action: bids or asks for book data, buys or sells for trades |
| AMOUNT | FLOAT | The amount of the action |
| PRICE | FLOAT | The price level of the action |
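As a rough sketch, a record with the catalog fields above could be modeled as a typed structure. The class name `Record`, the snake_case attribute names, the helper `from_row`, and the sample values are hypothetical; only the seven fields and their STRING/FLOAT types come from the catalog.

```python
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class Record:
    """One standardized row, mirroring the seven catalog fields."""
    id: str
    type: str
    local_timestamp: float
    exchange_timestamp: float
    side: str
    amount: float
    price: float


def from_row(row: Mapping[str, str]) -> Record:
    """Convert a row keyed by catalog field names, coercing FLOAT fields."""
    return Record(
        id=row["ID"],
        type=row["TYPE"],
        local_timestamp=float(row["LOCAL TIMESTAMP"]),
        exchange_timestamp=float(row["EXCHANGE TIMESTAMP"]),
        side=row["SIDE"],
        amount=float(row["AMOUNT"]),
        price=float(row["PRICE"]),
    )


# Sample values are invented for illustration only.
sample = from_row({
    "ID": "abc-123",
    "TYPE": "trade",
    "LOCAL TIMESTAMP": "1672531200.25",
    "EXCHANGE TIMESTAMP": "1672531200.10",
    "SIDE": "buy",
    "AMOUNT": "0.5",
    "PRICE": "100.5",
})
print(sample)
```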

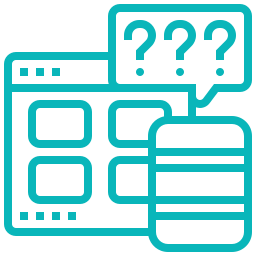

Data Validation

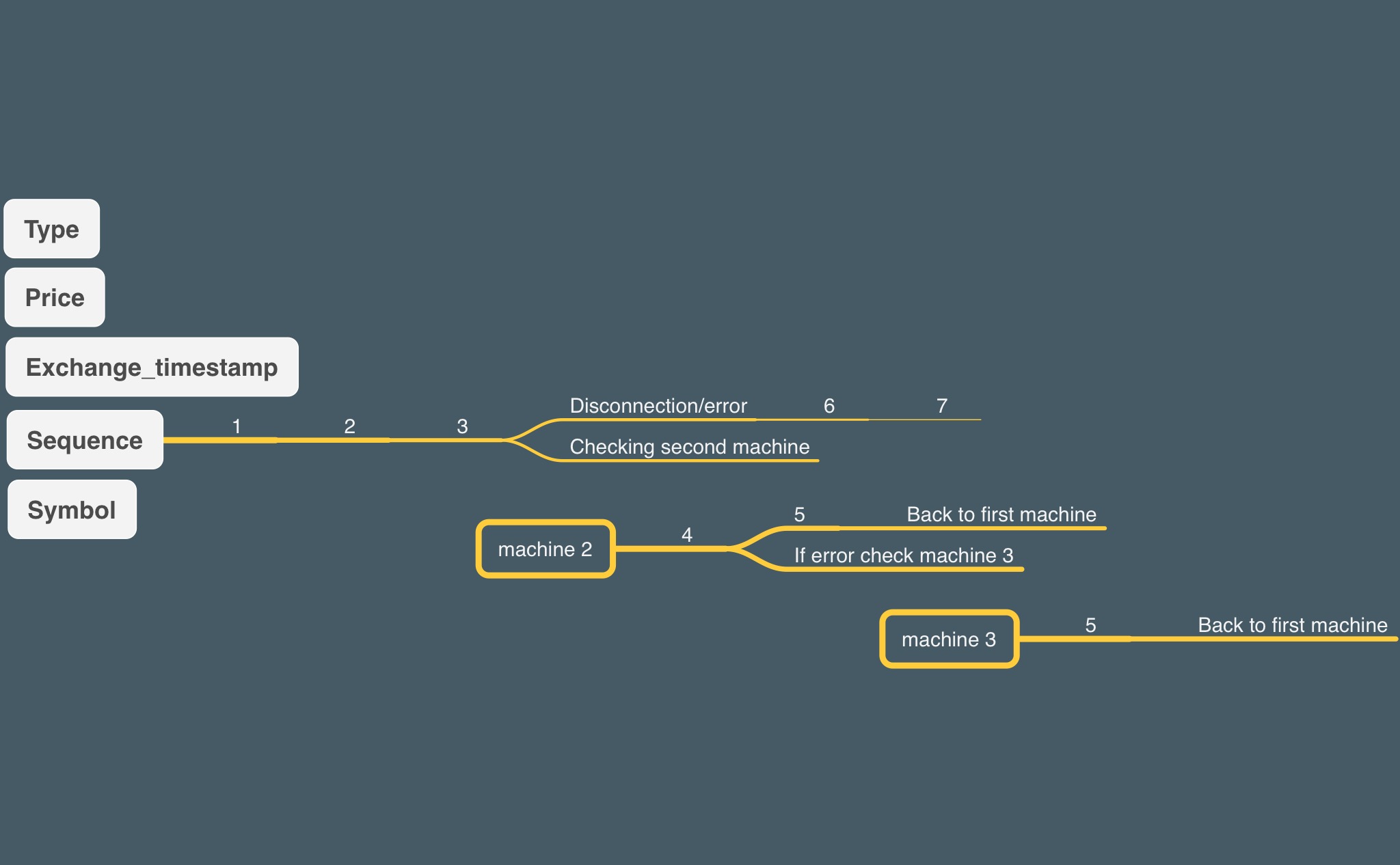

Finally, it is important to mention that we at Quantitools aim for confidence in the data we offer, which is why we use multiple methods to validate the data we acquire from the exchanges. One of these methods is sequence validation, which makes sure there are no gaps in the data by checking the sequence numbers on every record we receive.

As shown above, the validation bot runs over the data to check for gaps in the sequence. If it detects a gap, it fills it from the other machines running on our servers, then continues the check on the first machine, and so on.
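The gap check and fill described above can be sketched as follows. This is a minimal illustration, not the actual validation bot: it assumes sequence numbers are integers that increase by exactly one per record, that each collector's data is a dictionary keyed by sequence number, and the names `find_gaps` and `fill_gaps` are hypothetical.

```python
from typing import Dict, Iterable, List, Tuple


def find_gaps(seqs: Iterable[int]) -> List[Tuple[int, int]]:
    """Return inclusive (start, end) ranges of missing sequence numbers."""
    ordered = sorted(set(seqs))
    gaps = []
    for prev, cur in zip(ordered, ordered[1:]):
        if cur - prev > 1:
            gaps.append((prev + 1, cur - 1))
    return gaps


def fill_gaps(
    primary: Dict[int, dict], backups: List[Dict[int, dict]]
) -> Tuple[Dict[int, dict], List[int]]:
    """Fill gaps in the primary collector from redundant collectors.

    Returns the merged records and the sequence numbers that no
    collector had, so they can be stored as missing events.
    """
    merged = dict(primary)
    still_missing = []
    for start, end in find_gaps(primary):
        for seq in range(start, end + 1):
            for backup in backups:
                if seq in backup:
                    merged[seq] = backup[seq]
                    break
            else:
                # No redundant collector had this record.
                still_missing.append(seq)
    return merged, still_missing


# Toy data: the primary collector lost records 3 and 4; a backup has 3.
primary = {1: {"price": 10.0}, 2: {"price": 10.1}, 5: {"price": 10.4}}
backup = {3: {"price": 10.2}}
merged, missing = fill_gaps(primary, [backup])
print(sorted(merged), missing)
```

Records that no collector captured are returned separately rather than silently dropped, so the remaining gaps stay visible to whoever analyzes the data.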